Evaluate retrieval at scale

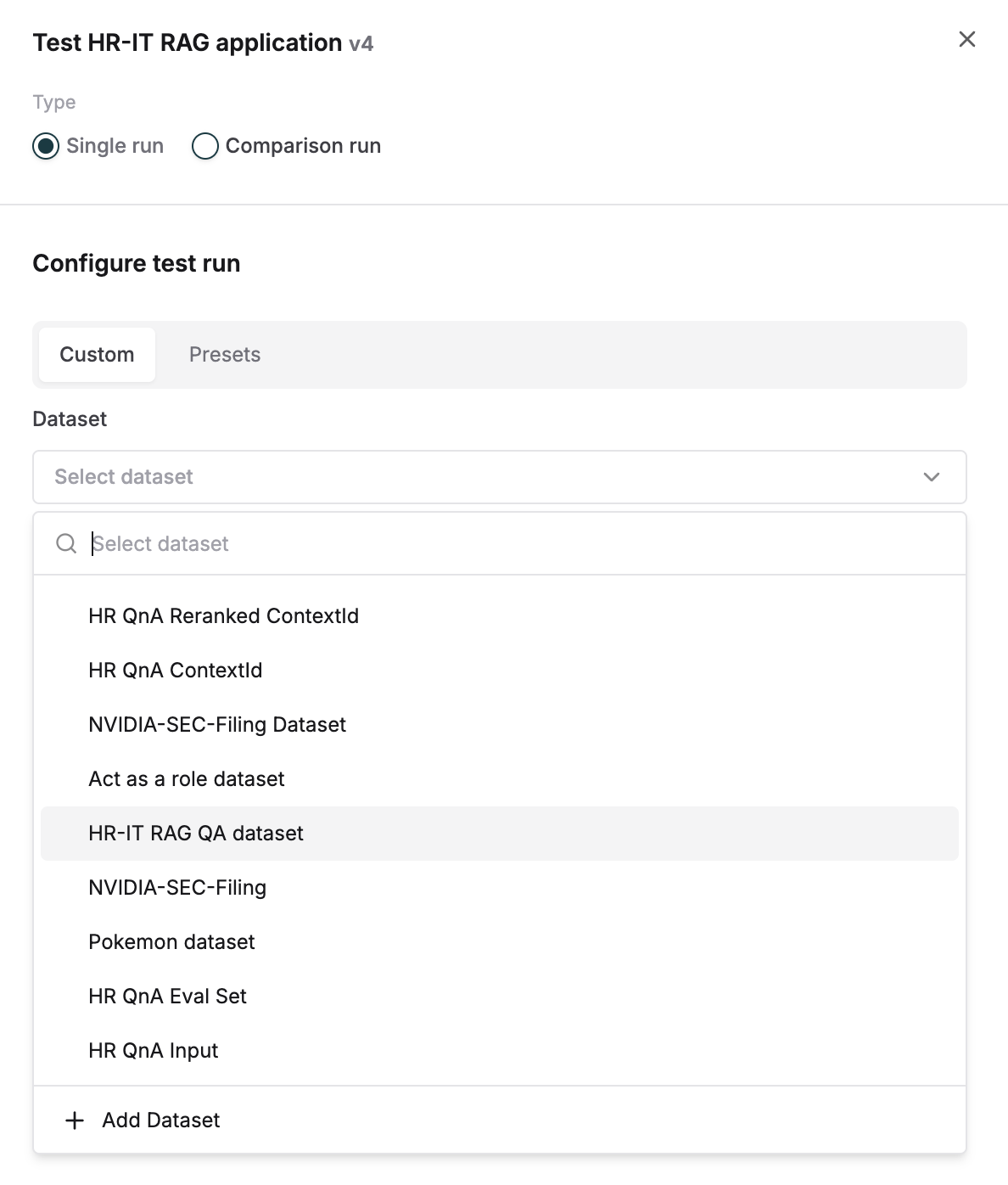

While the playground experience allows you to experiment and debug when retrieval is not working well, it is important to do this at scale across multiple inputs and with a set of defined metrics. Follow the steps given below to run a test and evaluate context retrieval.Initiate prompt testing

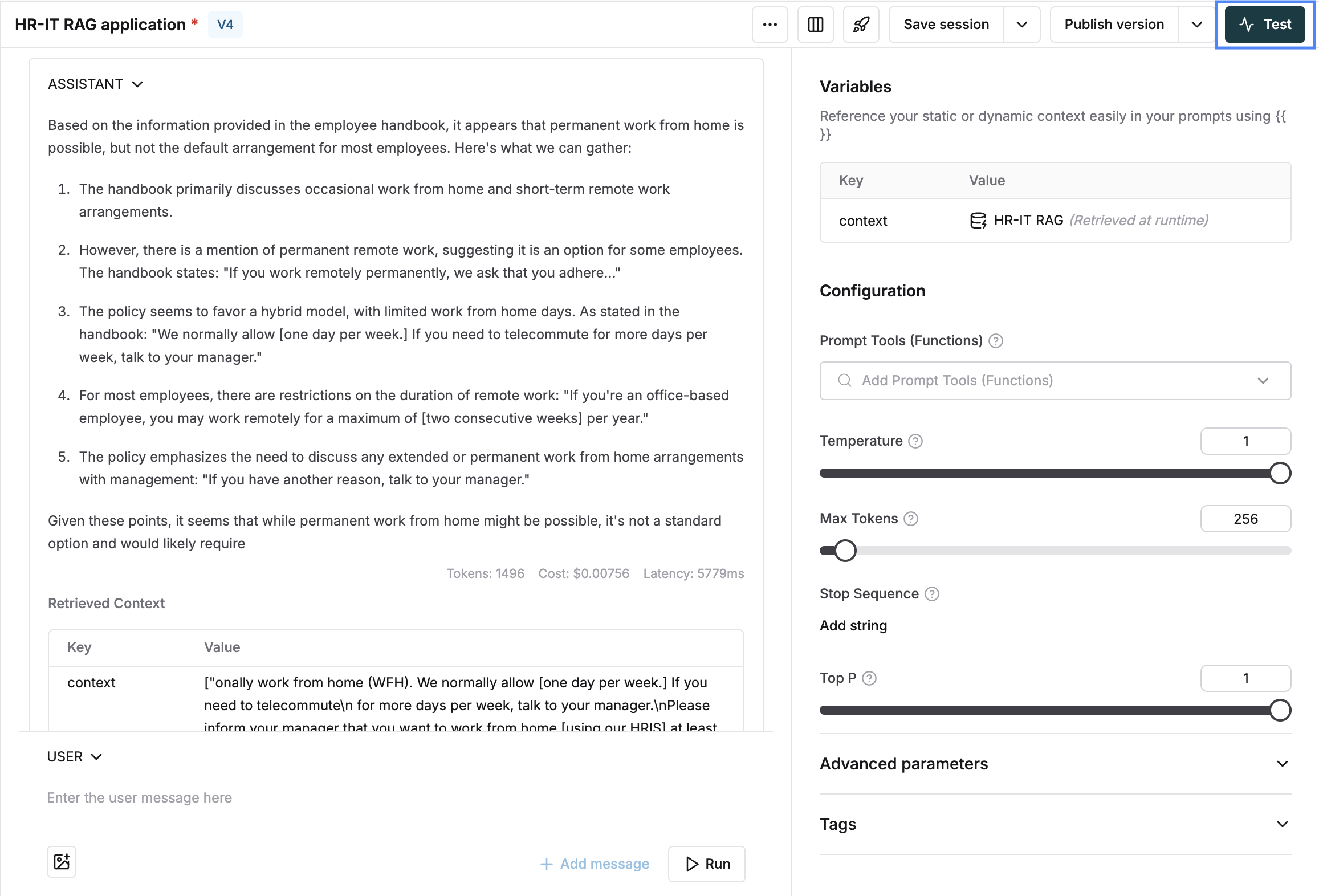

Click on test for a prompt that has an attached context (as explained in the previous section).

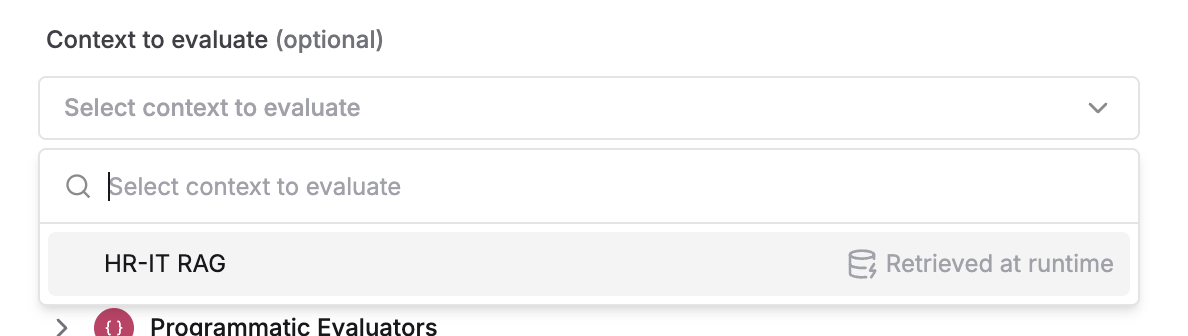

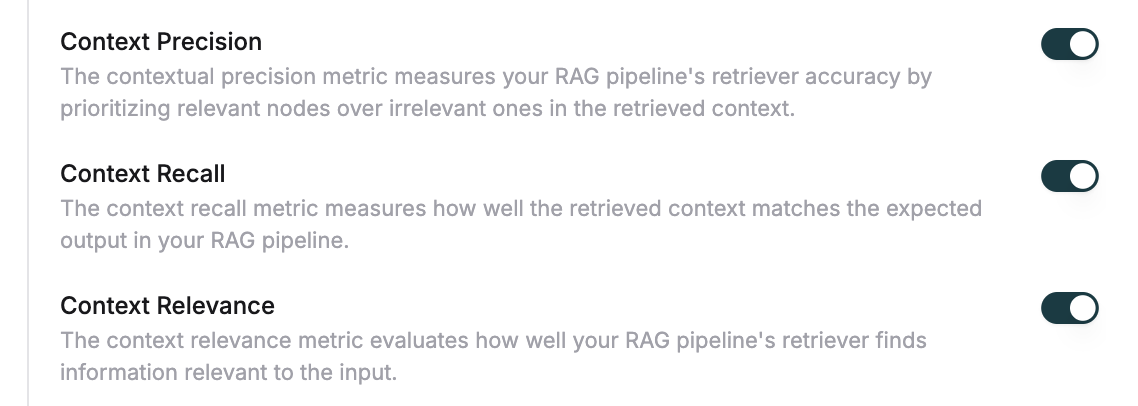

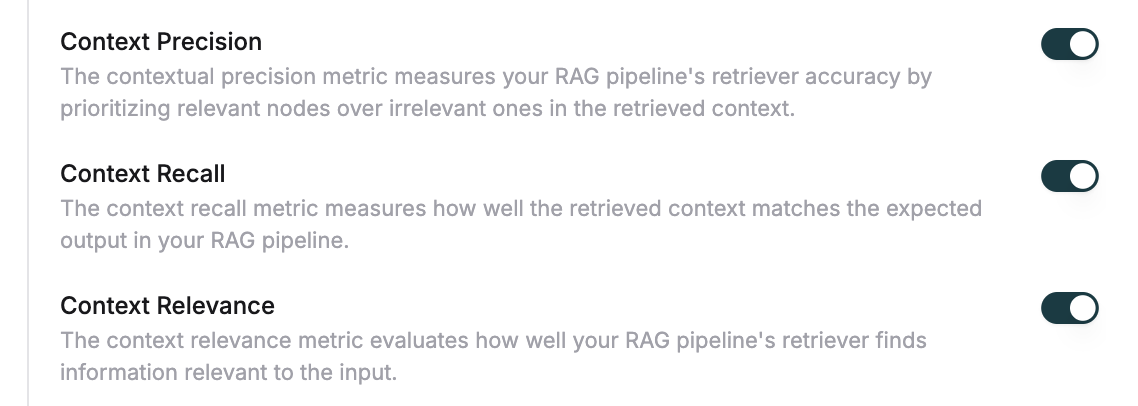

Add retrieval quality evaluators

Select context specific evaluators - e.g. Context recall, context precision or context relevance and trigger the test

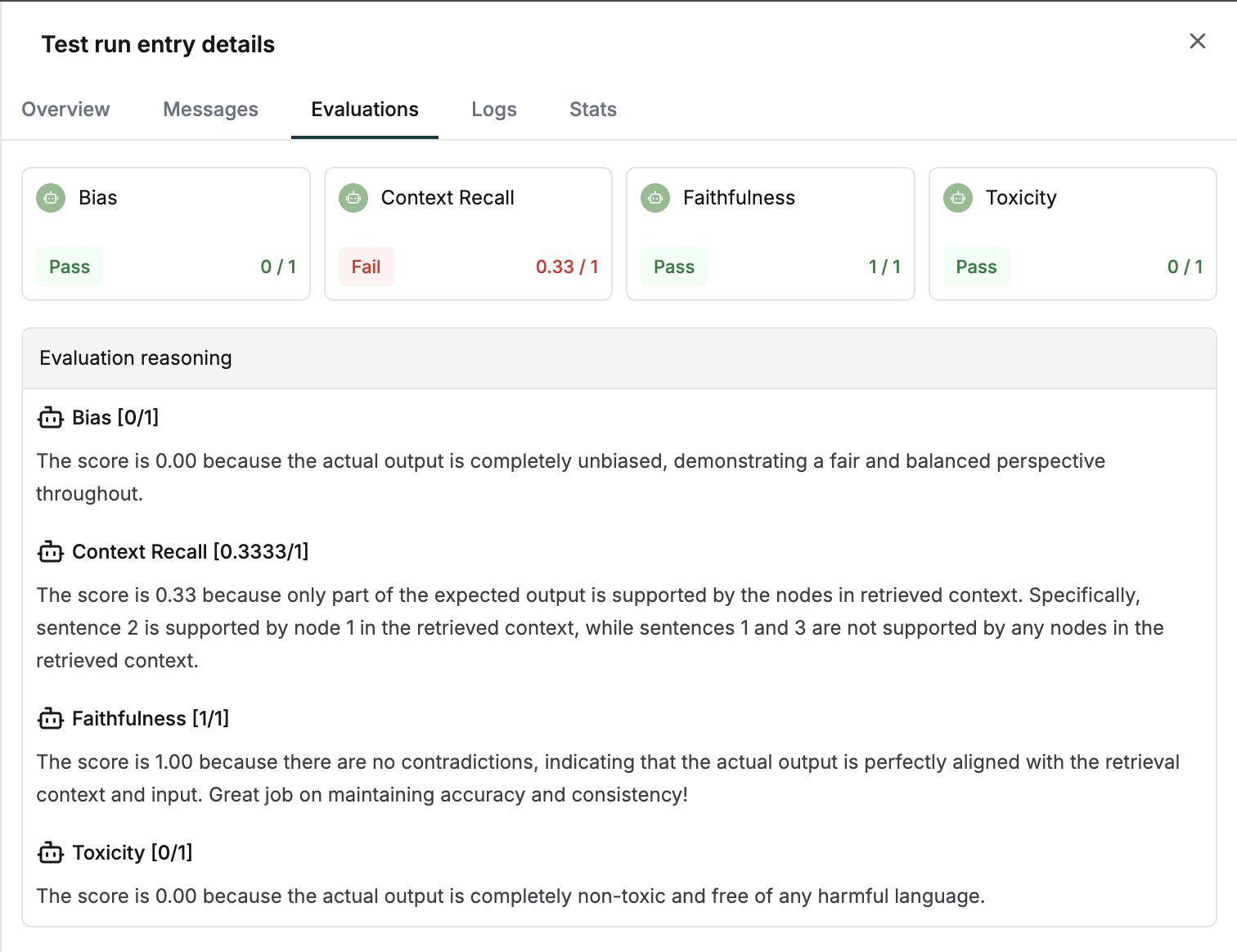

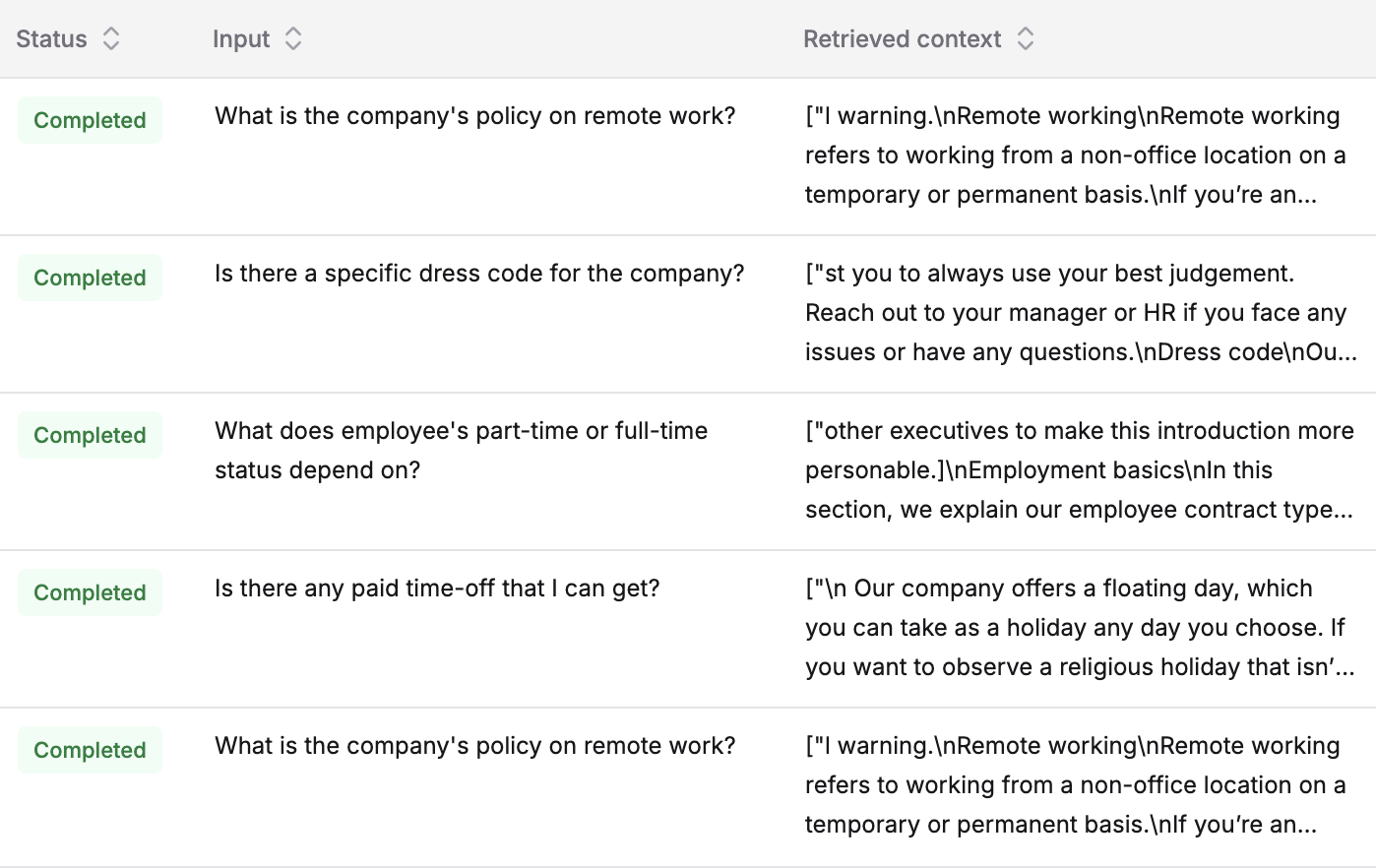

Review retrieved context results

Once the run is complete, the retrieved context column will be filled for all inputs.

Examine detailed chunk information

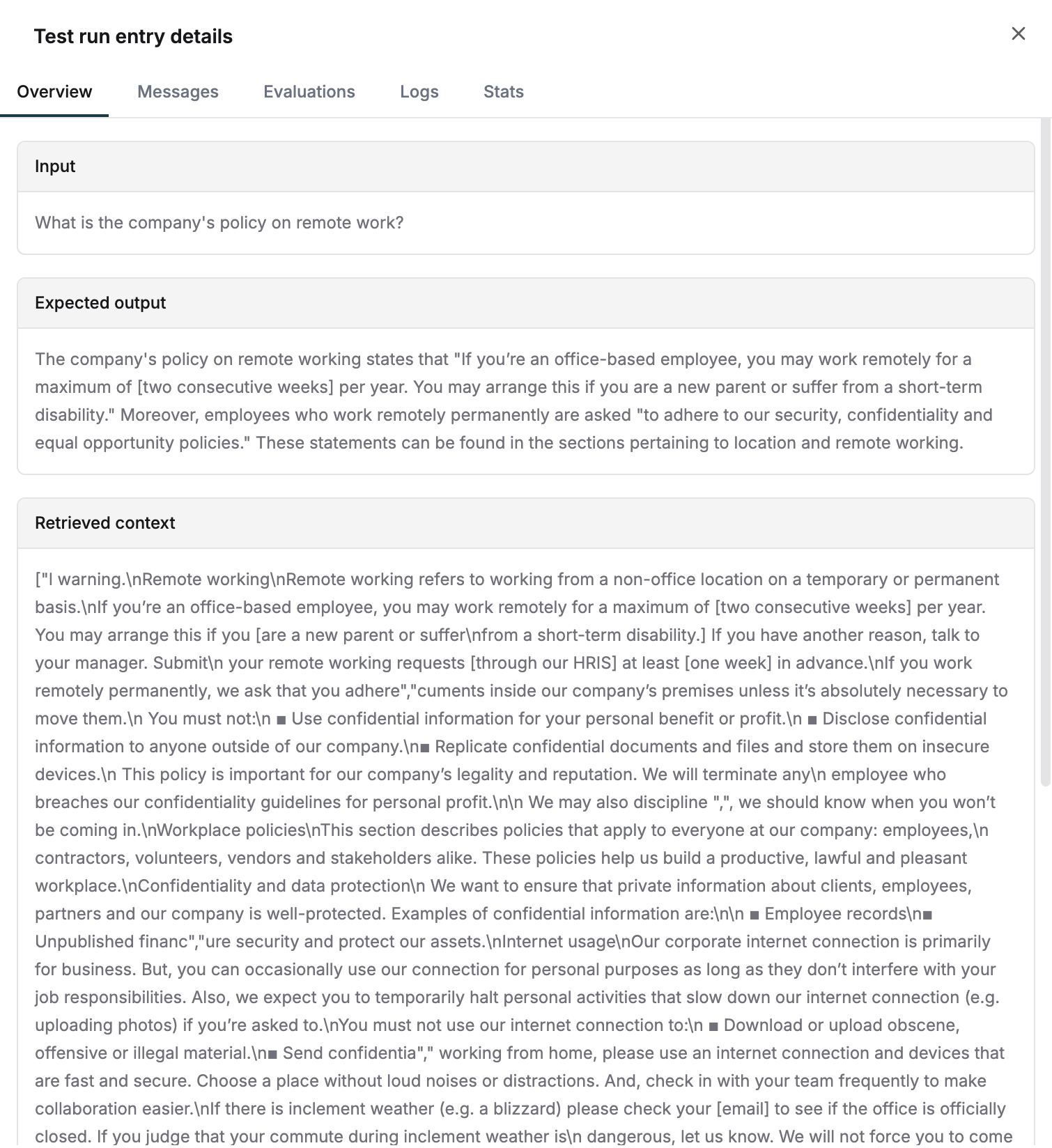

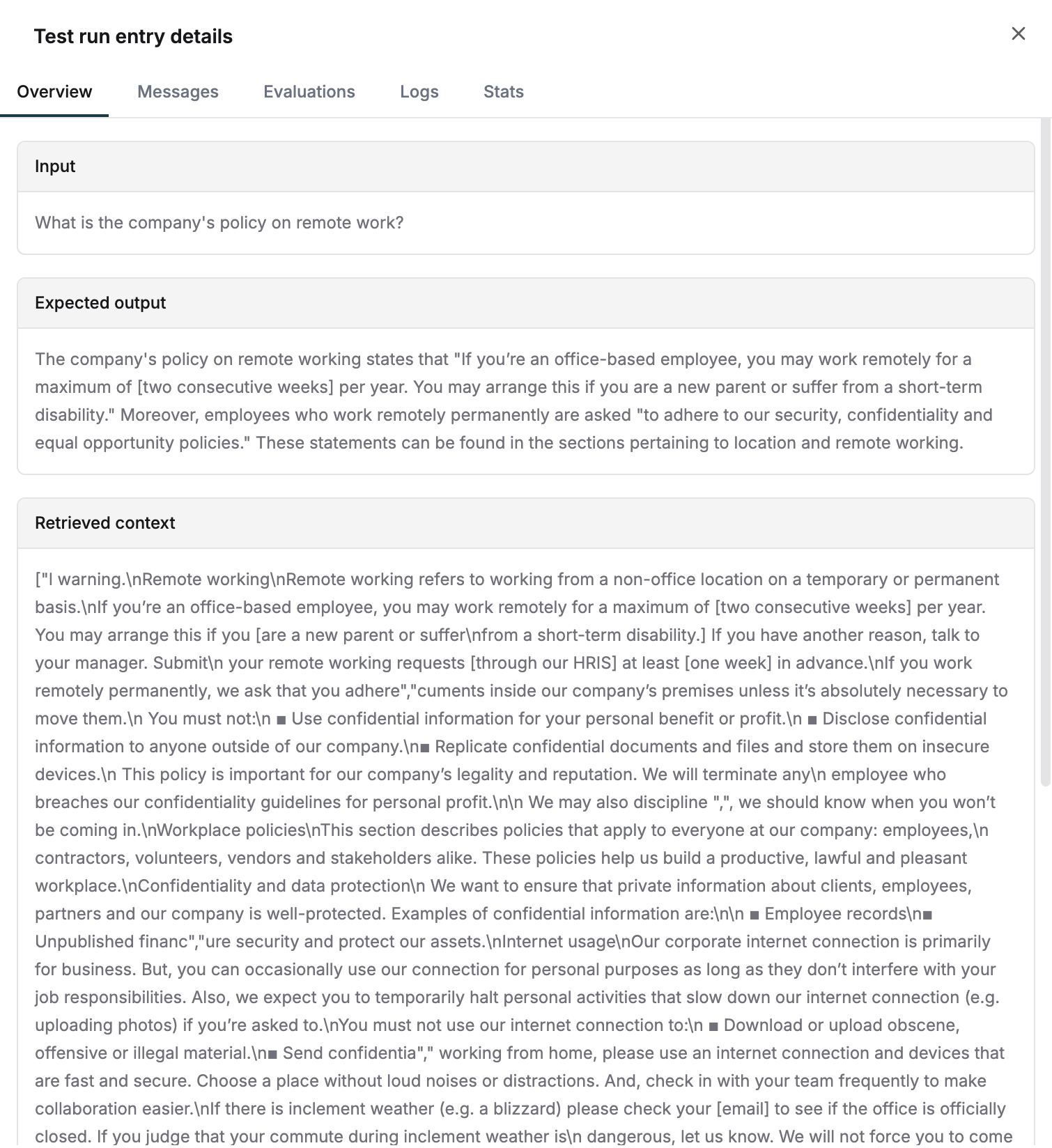

View complete details of retrieved chunks by clicking on any entry.