Components Of The Library

At Maxim, we provide the supporting components you need to ship high-quality AI reliably and with confidence. Pre-release Tests and Post-release Observation are our hero flows for testing, and the Library in Maxim adds several supporting pieces to assist you: evaluators, datasets, context sources, prompt tools, and custom models.

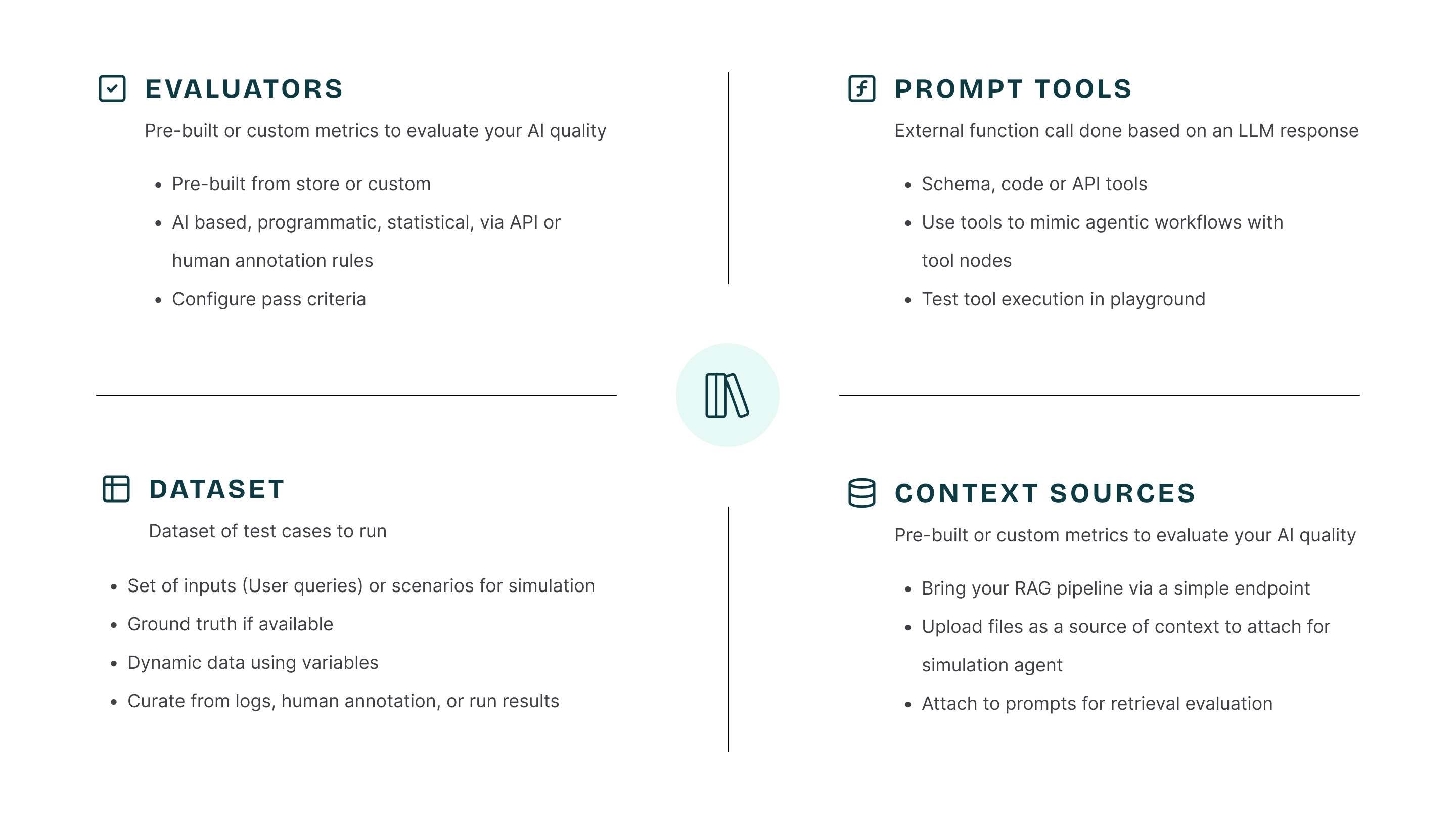

Evaluators

You can access all evaluators under the Evaluators tab in the left-side menu. You can also open the Evaluator Store to browse evaluators and add them to your workspace. Read more about evaluators here.

Datasets

You can create robust multimodal datasets on Maxim, which you can then use for your testing workflow. Read more about datasets here.

Context Sources

To test your RAG pipeline, you need to evaluate the retrieved context along with the final generated output. You can bring your retrieved context into Maxim using an HTTP workflow. Read more about context sources here.

Prompt Tools

Attaching function calling to prompts lets you test your actual application flow by mimicking the agentic workflows of your real application. Read more about prompt tools here.

Custom Models

At Maxim, you can create and update datasets, which keep evolving as you move through the application lifecycle. You can use these datasets for fine-tuning through our partnerships with fine-tuning providers. If you have such a need, please drop us a line at contact@getmaxim.ai.

Schedule a demo to learn more about how to use library components to enhance your AI application testing workflow.